Millions of Views From AI Music: The Secret Is in the Lyrics, Not the Model

The conversation around AI music focuses almost exclusively on the models. Suno AI releases a new version and the community dissects the audio quality, the vocal range, the genre versatility, the way it handles high notes or complex rhythms. Udio launches an update and the comparison videos flood social media within hours: which model sounds more human, which one handles bass better, which one produces cleaner mixes. The models are impressive, genuinely impressive, and they deserve the attention they receive. But after producing hundreds of AI tracks and watching some of them accumulate millions of views while others disappeared into the algorithmic void, the pattern that emerges has almost nothing to do with which model generated the audio. The tracks that took off, the ones that people shared and replayed and added to playlists and commented on and used in their own videos, all had one thing in common. The lyrics were good.

Not good in the literary sense. Not poetry. Not the kind of lyrics that win songwriting awards or get studied in university courses. Good in the functional sense. Lyrics that fit the genre. Lyrics where the syllable count matched the rhythm. Lyrics where the chorus was memorable enough to stick after one listen. Lyrics where the emotional tone matched the musical mood so completely that the words and the melody felt inseparable. These are the qualities that separate a track people listen to once out of curiosity from a track people add to their library and return to repeatedly. And these qualities live entirely in the lyrics, not in the model that generates the audio around them.

The AI music community has a persistent blind spot around this truth. Forum threads and Discord channels are filled with discussions about model settings, prompt engineering for audio style, generation parameters, and clever ways to coax better instrumental arrangements from the AI. These are all valid concerns, but as a rough estimate from that experience, not a measurement, they address maybe 30% of what determines whether a track succeeds. The other 70% is the words that the AI is singing. Feed Suno AI a poorly written verse with awkward phrasing and inconsistent meter, and the result will be a technically proficient audio track wrapped around lyrics that feel wrong in a way the listener cannot quite articulate but definitely notices. Feed the same model a well-crafted verse where every syllable lands on beat and every line earns its place, and the result feels like a real song. Same model. Same audio quality. Entirely different outcome.

What "Good Lyrics" Actually Means for AI Music

Traditional songwriting advice does not translate directly to AI music, and that mismatch trips up a lot of creators who come from a writing background. A beautifully written lyric with vivid imagery, complex metaphors, and unexpected vocabulary choices can produce terrible results when fed into Suno AI or any similar model. The reason is that AI music models generate melody and phrasing simultaneously with the audio, which means they need lyrics that are rhythmically cooperative. A seven-syllable line followed by a thirteen-syllable line followed by a four-syllable line creates rhythmic chaos that the model has to compensate for, and the compensation usually sounds like awkward pauses, rushed delivery, or melodic contortions that break the flow of the song.

Good lyrics for AI music have consistent syllable counts within each section. A verse where every line is roughly the same length gives the model a stable rhythmic foundation to build a melody on. This does not mean every line must be exactly the same number of syllables, but the variation should be intentional and predictable: a pattern like 8-8-8-6 or 10-10-8-10 gives the model enough structure to create a cohesive melody while allowing enough variation to keep the phrasing interesting. Random syllable counts produce random melodic results, and random rarely sounds good.
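A quick way to check a section before generating is to count syllables per line and look at the pattern. The sketch below is only a rough illustration: it estimates syllables by counting vowel groups in the spelling, which miscounts plenty of English words, and the function names and the tolerance of two syllables are assumptions made for this example, not part of any tool mentioned here.

```python
import re

def count_syllables(word: str) -> int:
    """Rough estimate: count vowel groups, discounting a silent trailing 'e'."""
    word = re.sub(r"[^a-z]", "", word.lower())
    if not word:
        return 0
    count = len(re.findall(r"[aeiouy]+", word))
    # "home" or "time" end in a silent 'e'; "little" ends in a voiced "-le".
    if word.endswith("e") and not word.endswith("le") and count > 1:
        count -= 1
    return max(count, 1)

def line_syllables(line: str) -> int:
    """Estimated syllable count for one lyric line."""
    return sum(count_syllables(w) for w in line.split())

def syllable_pattern(section: list[str]) -> list[int]:
    """Per-line estimates for a verse or chorus, e.g. [8, 8, 8, 6]."""
    return [line_syllables(line) for line in section]

def is_consistent(pattern: list[int], tolerance: int = 2) -> bool:
    """True when the longest and shortest lines differ by at most `tolerance`."""
    return not pattern or max(pattern) - min(pattern) <= tolerance

if __name__ == "__main__":
    verse = [
        "walking down the road tonight",
        "every window full of light",
    ]
    pattern = syllable_pattern(verse)
    print(pattern, "consistent" if is_consistent(pattern) else "uneven")
```

A pattern like 8-8-8-6 passes this check, while 7-13-4 does not. Treat the numbers as a prompt to read the line aloud, since a spelling-based estimate cannot replace an ear.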

Rhyme schemes serve a similar structural purpose. End rhymes give the model clear anchor points for melodic resolution. When the AI encounters a rhyming couplet, it naturally creates a melodic phrase that resolves on the rhyme, which produces the satisfying sense of completion that listeners expect at the end of each line pair. Unrhymed lyrics do not give the model these anchor points, and the resulting melody often wanders without clear phrase boundaries, creating a sense of musical aimlessness that even listeners who cannot identify the technical issue will perceive as "something sounds off." The rhymes do not need to be perfect. Near rhymes and slant rhymes work well. But some kind of phonetic pattern needs to exist for the model to latch onto.
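The same kind of pre-check works for rhyme. The following sketch labels the end-rhyme pattern of a section by comparing the spelling of each line's last word from its final vowel group onward. This is an assumption-heavy shortcut: it works on spelling rather than sound, so it will miss many near rhymes and slant rhymes that sing perfectly well, and the helper names are made up for illustration.

```python
import re
from string import ascii_uppercase

def rhyme_key(line: str) -> str:
    """Spelling-based ending of the last word: final vowel group plus what follows."""
    words = re.findall(r"[a-z']+", line.lower())
    if not words:
        return ""
    last = words[-1].replace("'", "")
    # Drop a likely silent trailing 'e' when another vowel precedes it.
    if len(last) > 2 and last.endswith("e") and re.search(r"[aeiouy]", last[:-1]):
        last = last[:-1]
    match = re.search(r"[aeiouy]+[^aeiouy]*$", last)
    return match.group(0) if match else last

def rhyme_scheme(lines: list[str]) -> str:
    """Label each line with a letter; lines sharing a rhyme key share a letter."""
    labels: dict[str, str] = {}
    scheme = []
    for line in lines:
        key = rhyme_key(line)
        if key not in labels:
            labels[key] = ascii_uppercase[len(labels) % 26]
        scheme.append(labels[key])
    return "".join(scheme)

if __name__ == "__main__":
    verse = [
        "I saw you in the fading light",
        "We danced until the end of night",
        "The summer slipped so far away",
        "I think about it every day",
    ]
    print(rhyme_scheme(verse))
```

A result where every line gets its own letter suggests the section has no phonetic pattern for the model to latch onto, although a human read-through should have the final say.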

Mood alignment between the lyrical content and the genre is the third pillar. A track tagged as "upbeat pop" that contains lyrics about heartbreak and loss sends contradictory signals that the model resolves unpredictably. Sometimes the result is a strangely cheerful-sounding song about terrible things, which can work if it is intentional but usually just feels confused. The lyrics and the genre tag need to agree on what the song is about emotionally. This sounds obvious, but it is one of the most common mistakes in AI music creation: writing lyrics in isolation and then picking a genre based on what sounds cool rather than what matches the lyrical content.

The Professional Lyrics Workflow and Why It Exists

Recognizing that lyric quality is the primary determinant of track quality led to a structured approach to lyric creation. The casual method of "type some lines, paste them into Suno, generate, hope for the best" produced inconsistent results even when individual lines were well-written, because consistency across an entire song requires structural planning that ad hoc writing rarely achieves. A verse that works beautifully in isolation can clash rhythmically with the chorus that follows it, and neither one is "wrong" individually. The problem is the lack of structural coordination between them.

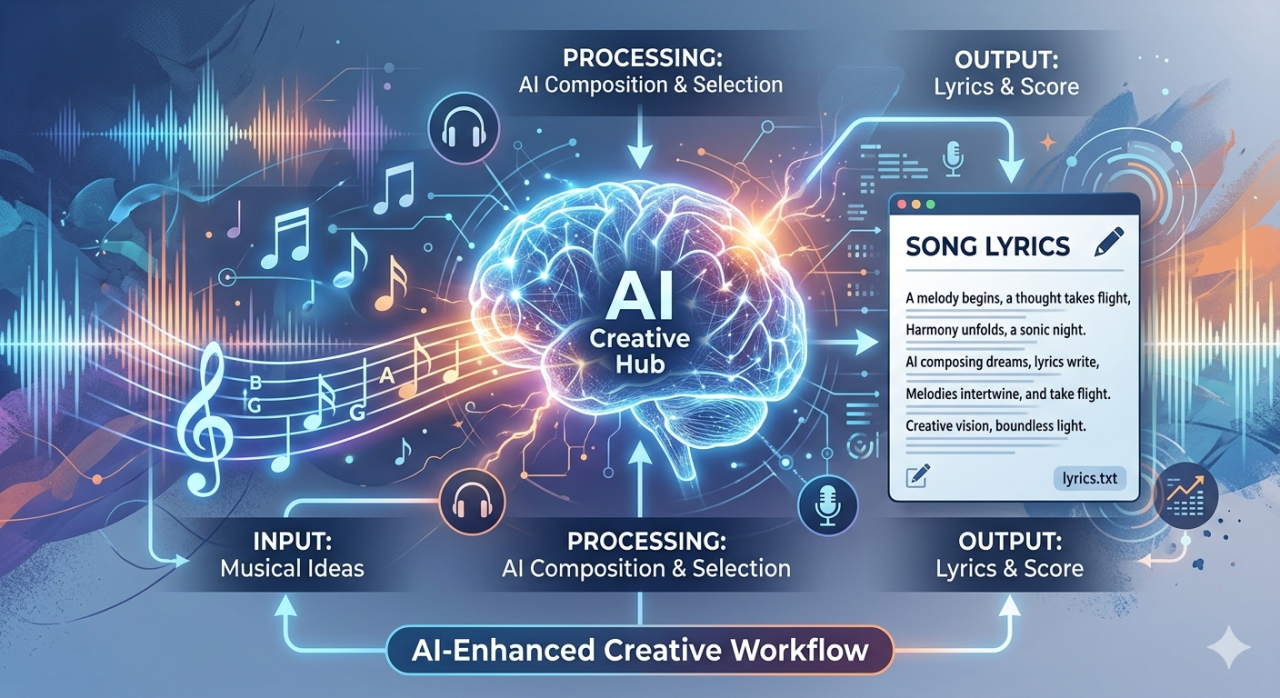

The lyrics generator at ailyrics.yeb.to was built to solve exactly this structural coordination problem. The workflow begins with inputs that define the song's identity: a topic or theme, a genre, a mood, a tone, and a set of keywords that should appear in the lyrics. These inputs establish the creative boundaries within which the AI generates lyrics that are structurally consistent from start to finish. The output is a complete song with verses, chorus, bridge, and outro, where every section has consistent syllable counts, a coherent rhyme scheme, and emotional content that aligns with the specified mood and genre.
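It can help to write those five inputs down as a small record before opening any tool. The sketch below is a local note-keeping structure only. It does not call ailyrics.yeb.to, and the class name, field names, and the keyword check are assumptions invented for this example rather than the generator's actual interface.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class SongBrief:
    """Hypothetical record of the five inputs described above."""
    topic: str
    genre: str
    mood: str
    tone: str
    keywords: tuple[str, ...] = ()

    def missing_keywords(self, lyrics: str) -> list[str]:
        """Keywords from the brief that never appear in the lyrics text."""
        text = lyrics.lower()
        return [k for k in self.keywords if k.lower() not in text]

if __name__ == "__main__":
    brief = SongBrief(
        topic="summer road trip",
        genre="indie pop",
        mood="nostalgic",
        tone="warm",
        keywords=("highway", "sunset"),
    )
    draft = "We chased the sunset down the coast"
    print(brief.missing_keywords(draft))
```

Keeping the brief next to the draft makes it easy to see during review whether the lyrics still carry the vocabulary the song was supposed to be anchored in.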

The difference between lyrics generated with this kind of structural awareness and lyrics generated by asking a general-purpose chatbot to "write a song about summer" is dramatic. The chatbot produces text that reads well on the page but performs poorly as sung lyrics because chatbots optimize for reading quality, not singability. They favor long words over short ones, complex sentence structures over simple repetitive ones, and intellectual sophistication over emotional directness. All of these preferences produce exactly the kind of lyrics that AI music models struggle with. A purpose-built lyrics generator optimizes for the opposite qualities: singable phrasing, rhythmic consistency, emotional clarity, and structural patterns that music models can translate into compelling melodies.

The tracks that accumulated millions of views were all created with this structured approach. Topic defined first. Genre selected to match the intended audience. Mood and tone specified to align lyrics and audio style. Keywords chosen to anchor the song's vocabulary in language that resonates with the target genre. The resulting lyrics were then fed into Suno AI with minimal editing, and the model had everything it needed to produce a track that sounded intentional, cohesive, and professionally crafted rather than randomly generated.

From Lyrics to Finished Track: The Complete Pipeline

The lyrics generation step is the beginning of a pipeline that extends through audio generation, subtitle creation, and video publishing. Once the lyrics are finalized, they are formatted with section markers (verse, chorus, bridge, outro) and fed into Suno AI. The section markers tell the model where structural transitions should occur, which prevents the common problem of a model that does not know when to shift from verse energy to chorus energy because the lyrics give no structural indication of the transition.
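Adding the section markers by hand is easy to get wrong on a long song, so a small formatter can do it. The sketch below assumes the markers are written as bracketed labels such as [Verse] and [Chorus] on their own line, which is a widely used convention but should be checked against the current documentation of whichever model is used. The function name and input shape are illustrative assumptions.

```python
def format_with_markers(sections: list[tuple[str, list[str]]]) -> str:
    """Join (section name, lines) pairs into one text with bracketed markers.

    Blank lines inside a section are dropped; sections are separated by
    one empty line so the structure stays visible in the pasted text.
    """
    blocks = []
    for name, lines in sections:
        body = "\n".join(line.strip() for line in lines if line.strip())
        blocks.append(f"[{name}]\n{body}")
    return "\n\n".join(blocks)

if __name__ == "__main__":
    song = [
        ("Verse", ["Walking down the road tonight", "Every window full of light"]),
        ("Chorus", ["Hold on, hold on", "We are almost home"]),
    ]
    print(format_with_markers(song))
```

The output is plain text that can be pasted into the lyrics field, with each structural transition marked explicitly.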

Once the audio track is generated, it needs a distribution format, and on YouTube the primary format for AI music is the lyric video. A lyric video displays the song's words synchronized with the audio, which serves both an artistic purpose (giving viewers something to engage with visually) and a practical one (viewers who can read the lyrics are more likely to sing along, share the track, and return for repeat listens). Creating these lyric videos requires accurate subtitle timing, which is where YEB Captions enters the workflow. The caption tool takes the audio track, transcribes it with precise word-level timing, and renders the text over a visual background to produce a complete lyric video.
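To make "word-level timing" concrete, here is a minimal sketch that turns timed words into cues in the common SubRip (.srt) subtitle format. It is not how YEB Captions works internally, which is not described here. The input shape of (word, start seconds, end seconds) tuples and the six-words-per-cue grouping are assumptions chosen for illustration.

```python
def srt_timestamp(seconds: float) -> str:
    """Format seconds as the HH:MM:SS,mmm timestamp used in .srt files."""
    ms = round(seconds * 1000)
    hours, ms = divmod(ms, 3_600_000)
    minutes, ms = divmod(ms, 60_000)
    secs, ms = divmod(ms, 1000)
    return f"{hours:02d}:{minutes:02d}:{secs:02d},{ms:03d}"

def words_to_srt(words: list[tuple[str, float, float]], max_words: int = 6) -> str:
    """Group timed words into numbered cues of up to `max_words` words each.

    Each cue starts when its first word starts and ends when its last word ends.
    """
    cues = []
    for i in range(0, len(words), max_words):
        chunk = words[i:i + max_words]
        start, end = chunk[0][1], chunk[-1][2]
        text = " ".join(word for word, _, _ in chunk)
        cues.append(
            f"{len(cues) + 1}\n{srt_timestamp(start)} --> {srt_timestamp(end)}\n{text}\n"
        )
    return "\n".join(cues)

if __name__ == "__main__":
    timed = [("Hold", 0.0, 0.5), ("on", 0.5, 1.25), ("tonight", 1.25, 2.0)]
    print(words_to_srt(timed, max_words=2))
```

Grouping by a fixed word count is the simplest possible choice. A real lyric video would more likely break cues at line endings so that each displayed line matches a sung phrase.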

The entire pipeline from idea to published video runs in six steps. Define the song concept with topic, genre, mood, and keywords at ailyrics.yeb.to. Review and refine the generated lyrics. Feed them into Suno AI with genre and style tags. Select the best generation from the model's output. Create a lyric video using the caption tool with styling that matches the song's genre and mood. Publish to YouTube with appropriate metadata. This pipeline consistently produces tracks that sound and look professional, and the view counts reflect that. The secret was never in finding the perfect model settings or the optimal generation parameters. The secret was always in the lyrics, and everything else followed from getting the words right first.

Frequently Asked Questions

Does the AI model matter at all for AI music quality?

The model absolutely matters for audio quality, vocal characteristics, and genre versatility. But audio quality is a necessary condition, not a sufficient one. A track with excellent audio quality and poor lyrics will sound polished but forgettable. A track with good audio quality and excellent lyrics will sound like a real song. The model provides the floor. The lyrics determine the ceiling.

Can general-purpose chatbots write good song lyrics?

General-purpose chatbots can write text that reads like song lyrics, but that text rarely performs well when sung. Chatbots optimize for reading quality, favoring complex vocabulary, long sentences, and intellectual depth. Sung lyrics require the opposite: simple vocabulary, rhythmic consistency, short phrases, and emotional directness. A purpose-built lyrics generator like ailyrics.yeb.to optimizes specifically for singability and structural consistency.

Why do syllable counts matter so much for AI music?

AI music models generate melody and phrasing based on the text they receive. Consistent syllable counts give the model a stable rhythmic framework to build on, resulting in melodies that flow naturally. Inconsistent syllable counts force the model to compensate with awkward pauses, rushed delivery, or unnatural melodic shifts that disrupt the song's flow even if the listener cannot pinpoint why it sounds wrong.

What inputs does the AI lyrics generator need?

The generator at ailyrics.yeb.to accepts a topic or theme, a genre, a mood, a tone, and a set of keywords. These inputs define the creative boundaries for the lyrics generation. The output is a complete song with properly structured verses, chorus, bridge, and outro, with consistent syllable counts and rhyme schemes tailored to the specified genre and mood.

How does lyric quality affect view counts for AI music?

Tracks with well-crafted lyrics consistently outperform tracks with generic or poorly structured lyrics, even when the audio quality is comparable. Good lyrics produce memorable choruses that encourage repeat listens, sharing, and playlist additions. Poor lyrics produce tracks that people listen to once and move on from. Over time, the difference in engagement compounds into dramatically different view counts for tracks that are otherwise similar in audio quality.

Is the lyric video creation part of the same tool?

Lyrics generation and lyric video creation are handled by separate tools that work together in a pipeline. ailyrics.yeb.to generates the lyrics. The audio is produced by feeding those lyrics into Suno AI or a similar model. YEB Captions then creates the lyric video by synchronizing the words to the audio with precise timing and customizable visual styling.